Why this matters

Most “leading indicators” in business and economics are themselves downstream of something earlier. Hiring data lags revenue. Revenue lags customer intent. Customer intent, usefully, leaks into search. That is why Google Trends has become a staple in forecasting — it captures a moment in the consumer and B2B attention cycle before it shows up in harder numbers.

But Google Trends is still downstream of decisions. Someone searches for “AI agents” because they have already heard the phrase, seen an ad, listened to a colleague explain it, or read a piece about it. By the time the search happens, the language has already diffused.

We asked: is there a signal upstream of search?

The answer appears to be yes. Long-form executive interviews — the podcasts where founders, CEOs, advisors, and senior operators speak for forty-five minutes at a time about what they are actually doing — consistently carry emerging themes well before those themes show up in public search.

The corpus

Our working corpus is 22,237 long-form executive interviews with complete transcripts and publication dates, spanning January 2018 through April 2026. The interviews come from podcasts whose primary format is a single guest in conversation for twenty minutes or more — not news roundups, not panel shows.

Every transcript is classified by the guest's role. For this paper we segment into four cohorts:

| Cohort | Transcripts | Role |
|---|---|---|
| CEO & Founder | 10,012 | Primary cohort: operators setting strategy |
| Advisor & Consultant | 7,739 | Cross-organization synthesis |
| Finance (CFO and finance leadership) | 401 | Small; treated as suggestive only |
| Media Host | 4,085 | Control cohort |

The CEO and Advisor cohorts are the load-bearing sources in the findings below. The Finance cohort is intentionally named but too small in this corpus to draw firm conclusions from. The Media Host cohort is included as a negative control — if the interview signal is coming from operators rather than from the podcast ecosystem itself, media hosts should trail or track, not lead.

The method

For each of twenty themes we care about, we do four things.

- Define the theme as a set of word-boundary keyword patterns. The patterns are deliberately generous (e.g. for agentic AI we match “AI agent”, “agentic AI”, “autonomous agent”).

- Compute the monthly mention rate per cohort. For cohort C and theme T in month M:

  rate(C, T, M) = (transcripts in C mentioning T during M) / (total transcripts in C during M)

  Using rates rather than raw counts controls for corpus growth over time.

- Pull monthly Google Trends data for one or two representative search terms per theme, worldwide, from January 2018 through the present, taking the maximum across terms.

- Measure lead in three ways per (cohort, theme): a sustained takeoff month, a forward cross-correlation lag, and a direct lead-to-peak — months between the interview takeoff and the Google Trends peak. Lead-to-peak is the metric we lead with: “we flagged it at X; public interest peaked at Y; we led the peak by Z months.”
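The first three steps can be sketched in a few lines of Python. This is a minimal illustration, not the article's scripts: it assumes transcripts arrive as `(cohort, month, text)` tuples with `month` as a "YYYY-MM" string, and the names `THEMES`, `compile_theme`, `monthly_rates`, and `combine_terms` are hypothetical. The optional plural suffix is also an assumption about how "generous" matching catches forms like “AI agents”.

```python
import re
from collections import defaultdict

# Hypothetical theme definition, using the agentic-AI phrases from the text.
THEMES = {
    "agentic_ai": ["AI agent", "agentic AI", "autonomous agent"],
}


def compile_theme(phrases):
    # One case-insensitive regex per theme, anchored on word boundaries so a
    # phrase embedded inside a longer word does not count. The trailing "s?"
    # (an assumption) lets simple plurals such as "AI agents" match.
    alternation = "|".join(re.escape(p) for p in phrases)
    return re.compile(rf"\b(?:{alternation})s?\b", re.IGNORECASE)


def monthly_rates(transcripts, pattern):
    # rate(C, T, M) = transcripts in C mentioning T during M
    #                 / total transcripts in C during M
    totals = defaultdict(int)
    hits = defaultdict(int)
    for cohort, month, text in transcripts:
        totals[(cohort, month)] += 1
        if pattern.search(text):
            hits[(cohort, month)] += 1
    return {key: hits[key] / n for key, n in totals.items()}


def combine_terms(*series):
    # Element-wise maximum across equal-length monthly Google Trends series,
    # one series per search term.
    return [max(values) for values in zip(*series)]


# Usage with made-up sample transcripts.
pattern = compile_theme(THEMES["agentic_ai"])
sample = [
    ("CEO & Founder", "2025-01", "We are piloting AI agents in support."),
    ("CEO & Founder", "2025-01", "Mostly we talked about hiring."),
    ("Media Host", "2025-01", "Agentic AI is everywhere this year."),
]
rates = monthly_rates(sample, pattern)
# rates[("CEO & Founder", "2025-01")] == 0.5
```

Dividing by every transcript a cohort published that month, not just the matching ones, is what keeps the rate comparable as the corpus grows.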

All code, data, and charts are reproducible from a single SQLite database and four Python scripts.
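The sustained-takeoff and lead-to-peak measures from the last method step can be sketched as follows. The article does not define "sustained", so the fixed `threshold` and the `min_run` of three consecutive months are assumptions, as are the function names. Treat this as an illustration of the metric, not the code behind the tables.

```python
def sustained_takeoff(rates, threshold, min_run=3):
    # Index of the first month of the earliest run of at least `min_run`
    # consecutive months with rate >= threshold; None if no such run exists.
    run = 0
    for i, rate in enumerate(rates):
        run = run + 1 if rate >= threshold else 0
        if run == min_run:
            return i - min_run + 1
    return None


def lead_to_peak(interview_rates, trends, threshold, min_run=3):
    # Months from the interview takeoff to the Google Trends peak.
    # Both lists are assumed to share the same monthly index.
    start = sustained_takeoff(interview_rates, threshold, min_run)
    if start is None:
        return None
    # max() returns the first maximal index if the peak value repeats.
    peak = max(range(len(trends)), key=trends.__getitem__)
    return peak - start


# Usage with made-up monthly series.
interview = [0.00, 0.01, 0.05, 0.06, 0.07, 0.08, 0.09]
trends = [1, 5, 3, 20, 40, 100, 95]
print(lead_to_peak(interview, trends, threshold=0.05))  # prints 3
```

A negative result would mean the Trends peak came before the interview takeoff. For a theme whose search interest is still rising at the end of the window, the computed "peak" is just the latest month, so the value is a lower bound. This is the sense of "and counting" in the agentic AI row.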

What we found

The clean cases — four technology-adoption themes

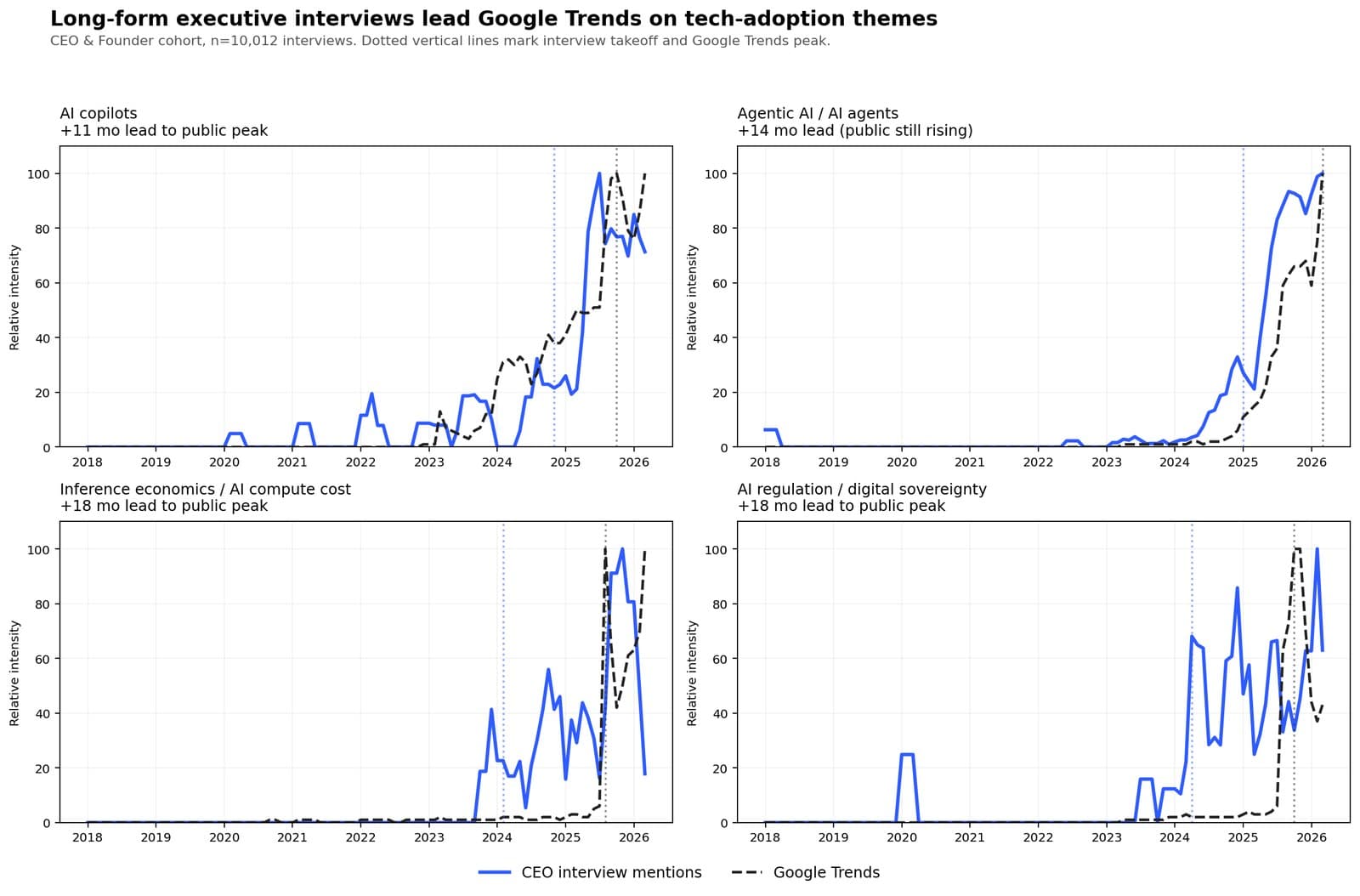

On four technology-adoption themes, the interview signal leads Google Trends with high shape correlation and a measurable lead-to-peak.

| Theme | Interview takeoff | Google Trends peak | Lead-to-peak | Shape r |
|---|---|---|---|---|
| AI copilots | 2023-Q3 | 2025-Q4 | +11 mo | 0.92 |
| Agentic AI / AI agents | 2025-Q1 | 2026-Q1+ (rising) | +14 mo and counting | 0.98 |
| Inference economics | 2024-Q1 | 2025-Q3 | +18 mo | 0.86 |
| AI regulation / digital sovereignty | 2024-Q1 | 2025-Q3 | +18 mo | 0.87 |

Two observations about this table are worth sitting with.

First, the shape correlations are unusually high. On agentic AI, the CEO-cohort mention rate and the Google Trends series move together with r = 0.98 once the interview series is shifted forward by two months. That is not a loose thematic echo. The two series are tracing the same curve, with one consistently earlier than the other.
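The forward cross-correlation lag behind figures like "r = 0.98 at a two-month shift" can be sketched like this. Slide the interview series forward one month at a time, correlate the overlapping months with Google Trends, and keep the lag with the highest Pearson r. The 24-month window is inferred from the "edge of lag window" note in the macro table. The 12-month minimum overlap and all names here are assumptions.

```python
import math


def pearson(x, y):
    # Pearson correlation of two equal-length sequences; NaN if either is flat.
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    if sx == 0 or sy == 0:
        return float("nan")
    return cov / (sx * sy)


def best_forward_lag(interview, trends, max_lag=24, min_overlap=12):
    # Pair interview[t] with trends[t + lag] for lag = 0..max_lag and return
    # (lag, r) for the best-matching lag; positive lag = interviews lead search.
    # Both series are assumed to be equal-length and month-aligned.
    best = None
    for lag in range(max_lag + 1):
        x = interview[: len(interview) - lag]
        y = trends[lag:]
        if len(x) < min_overlap:
            break  # overlap too short for r to mean much
        r = pearson(x, y)
        if math.isnan(r):
            continue
        if best is None or r > best[1]:
            best = (lag, r)
    return best
```

If the best lag equals `max_lag`, the true optimum may lie outside the search window. That is the caution attached to the hybrid-work row later in this paper.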

Second, the lead is longer on the more operational themes. Agentic AI — a customer-facing concept, easy to pilot and demo — leads by about fourteen months. Inference economics and AI regulation — the less glamorous themes about what it costs to run AI and what it is allowed to do — lead by eighteen.

Executives were doing the math on compute bills and the EU AI Act more than a year before the general public started typing either into Google.

Who leads — cohort differences

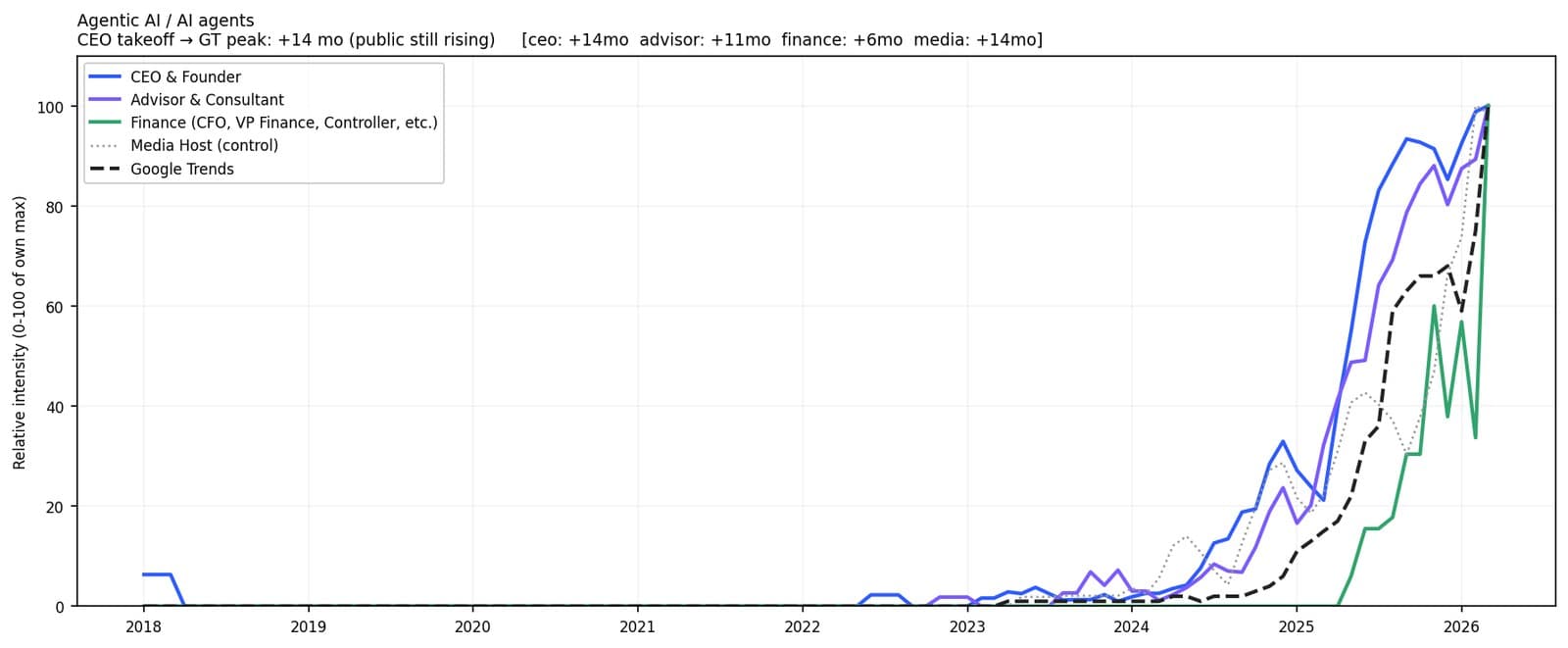

Drilling into agentic AI specifically, with all four cohorts visible, the CEO and Advisor cohorts track each other closely and lead Google Trends together. The media-host control tracks in the middle — these are tech podcasts, so media hosts are also early — but they do not lead the operator cohorts.

The CEO & Founder and Advisor & Consultant cohorts are approximately tied as the earliest detectable signal across technology themes. On agentic AI they are indistinguishable (r = 0.98 for both). On AI copilots and AI regulation, the CEO cohort leads the Advisor cohort by one to three months. Our working interpretation is that CEOs lead on themes tied to their product and market strategy, while Advisors lead on cross-organizational themes that become visible to them before any single operator has crystallized them.

The boundary — what does not lead

Not every theme shows this pattern. On macroeconomic themes (inflation, supply chain disruption, layoffs, hybrid work), no cohort clearly leads Google Trends. The four cohorts cluster together, correlations are weaker, and the lead-to-peak metric loses meaning: executives discuss these themes continuously, so there is no clean takeoff from a low baseline to measure from.

This is consistent with a plain-language interpretation: executives react to macro conditions; they do not predict them. When inflation spikes, executives talk about inflation; so does the public; both series move roughly together.

| Theme | Best cohort lead | Best r |
|---|---|---|
| Inflation | +5 mo (CEO) | 0.63 |
| Great Resignation / wage pressure | +0 mo (all cohorts) | ≈ 0.50 |
| Supply chain disruption | +15 mo (Advisor) | 0.35 |
| Hybrid / remote work | +24 mo (edge of lag window) | ≈ 0.25 |

These are not null results; they are useful ones. They tell you exactly where this methodology applies and where it does not.

What this evidence supports — and what it does not

The evidence supports:

- On emerging technology-adoption themes, long-form executive interviews lead Google Trends by roughly eleven to eighteen months, with high shape correlation (r = 0.86 to 0.98). Across the four themes, the metric is stable.

- Backend operational themes (cost, governance) lead further than customer-facing themes, likely because operators face those decisions before the market broadly does.

- CEO/Founder and Advisor/Consultant cohorts are both strong sources. The lift over a Media Host control on technology themes is consistent and visible in the charts.

- The interview signal is not a universal leading indicator. On macro conditions it behaves like shared discourse, not prediction — a boundary that is itself informative.

Honest limits of this claim:

- Correlation, not causation. We show consistent time-shifted correlation. We do not argue that interviews themselves cause public search.

- Retrospective, not prospective. The lead times are measured from series that have already resolved. The consistency across themes is suggestive but not a proof of forward predictability.

- Domain scope. The twenty themes span technology adoption, operating practice, and macroeconomics. The leading-indicator effect is concentrated in the first of these.

- Corpus composition. The interview corpus comes from executive-focused podcasts. Media hosts on these shows track tech themes more closely than they would on a general-interest show, which moderates — but does not erase — the cohort-over-control lift on tech themes.

Why the specific interview format matters

There is a reasonable skeptic's question: why long-form interviews and not something more structured, like earnings calls or press releases?

Earnings calls are scripted. A CFO mentioning AI adoption in Q3 2025 is doing so because investor relations has decided it is a topic worth mentioning, not because the CFO is in the middle of deciding what to do about it. Press releases and marketing copy are even further downstream — they are the announcement of a decision that was made months earlier.

Long-form interviews are different. They are forty-five-minute conversations where guests describe what they are piloting, what they are worried about, what they are evaluating, and what they believe the market is about to do. The format rewards unpolished thinking. It captures decisions in formation, not decisions announced.

This, we believe, is why the lead exists. Interviews capture what is being decided; search, marketing, earnings commentary, and press coverage capture what has already been decided and is being communicated.